Inclusive AI & Justice Tech

Building technology that serves communities, and holding technology accountable when it doesn't.

Technology is reshaping every dimension of public life, from how courts make decisions to how governments allocate resources. For communities already marginalized by structural discrimination, the stakes could not be higher.

ICAAD builds justice-tech tools that put data directly in the hands of frontline advocates, while simultaneously building human rights-centered frameworks for evaluating AI harm. Our approach starts from a simple premise: marginalized communities should have a say in how technology is designed, deployed, and governed.

When AI Causes Harm, Who Is Accountable?

The Problem: Ethics Are Not Rights

The rapid expansion of AI has outpaced the systems designed to hold it accountable. Today, AI incident databases identify technical failures but don't connect them to any accountability framework. Corporations define their own voluntary ethics guidelines, effectively deciding for themselves what rights people have.

ICAAD is shifting AI governance from ethics to rights. HRightsAI is the first human rights-centered incident database that identifies who is being harmed and which specific human rights have been violated.

Read the AI Harm Report

The Ten Harm Domains

Non-Discrimination

Algorithmic bias in hiring, lending, and social services.

Scientific Progress

Inequitable access to AI for the Global South.

Procedural Fairness

Due process in automated decision-making.

Privacy

Surveillance, data extraction, and lack of consent.

Meaningful Employment

Algorithmic management and displacement.

Freedom from Harm

Autonomous weapons and manipulative tech.

Freedom of Expression

Content moderation, censorship, and deepfakes.

Advocacy of Hatred

Algorithmic amplification of hate speech.

Assembly & Association

Surveillance of protest and social scoring.

Public Participation

Election manipulation and democratic interference.

Technology That Serves Communities

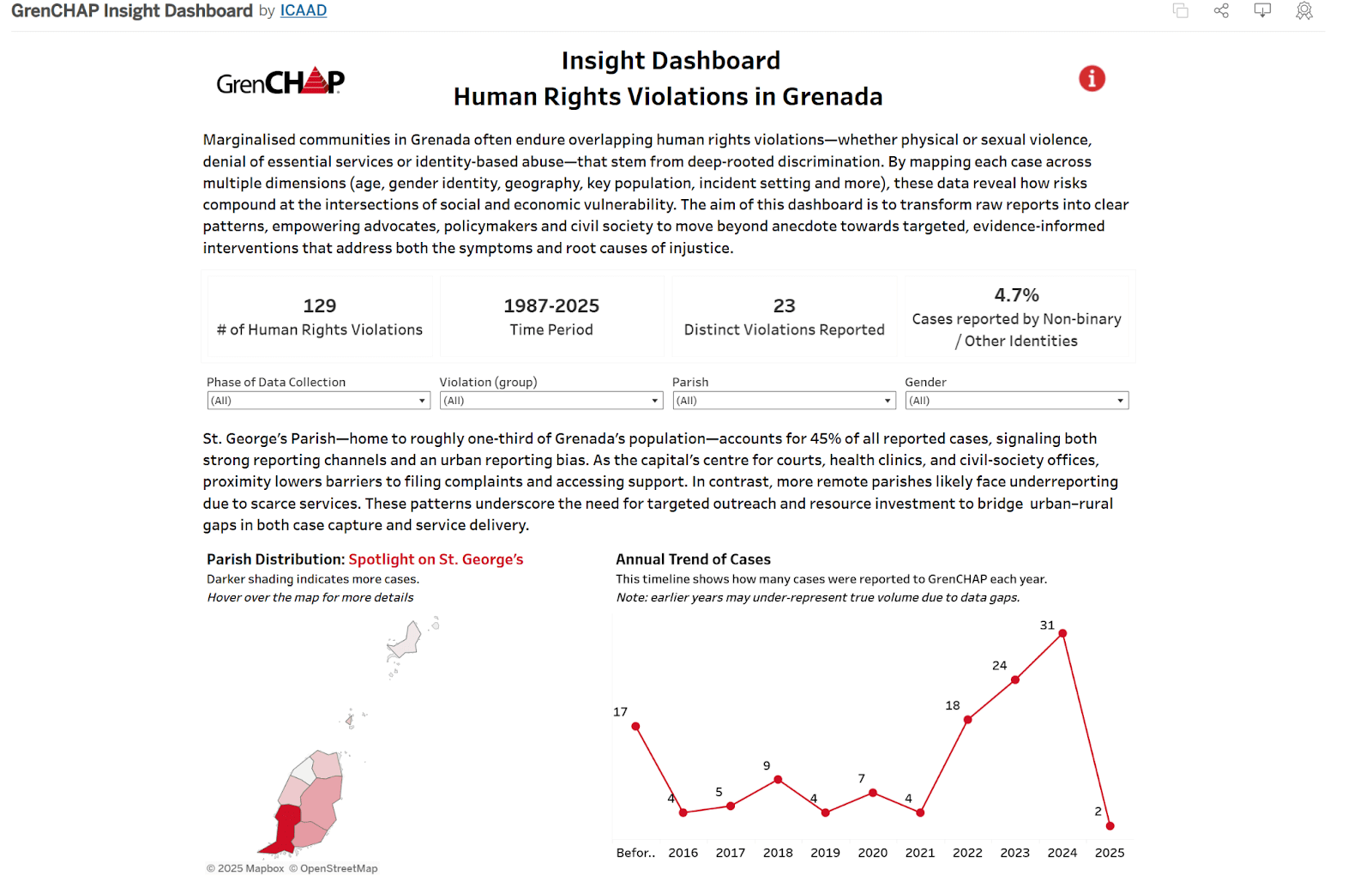

Case Study: GrenCHAP

GrenCHAP serves marginalized communities in Grenada, including LGBTQ+ people and persons living with HIV. ICAAD partnered with GrenCHAP to build an internal data system around their actual workflows, moving from paper records to real-time data entry.

In 2025, we co-created a public-facing dashboard, producing what Executive Director Kerlin Charles calls "narrative justice": using data to correct the silences that often define marginalized experiences.

"This transformation empowers us with instant analysis, improving both efficiency and accuracy."

Kerlin Charles, Executive Director

The TrackGBV Model

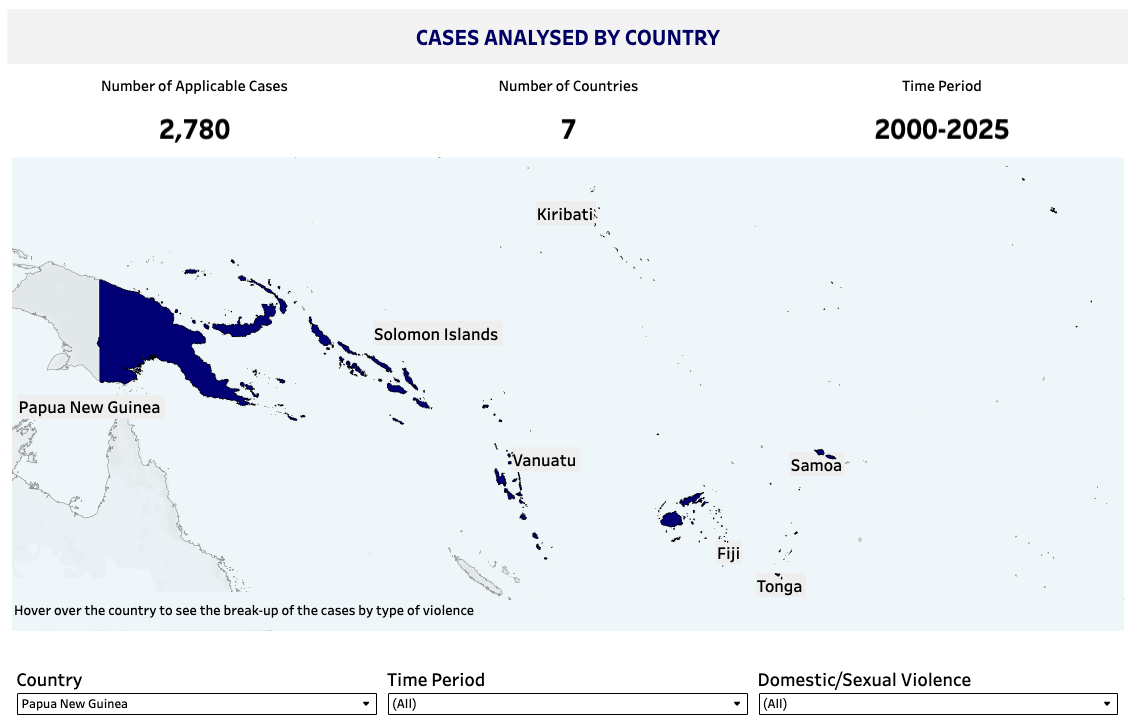

ICAAD's flagship justice-tech initiative tracks judicial bias across the Pacific. The system is now being automated through ImpartialAI, reducing analysis time from one hour per case to seconds.

EXPLORE GENDER JUSTICE PROGRAM →

Get Involved

Whether you're building AI systems, governing them, or impacted by them, help us ensure human rights are at the center.

From Our Archive

ICAAD's first community-tech initiative, co-developed with health workers in Assam, India, to map healthcare gaps.

Pilot Machine Learning (2016)

Our early experiments in automating case law analysis, which informed the development of ImpartialAI.

Advocacy Online Advocacy Course

Accessible tools for advocates, including data and technology modules.

Machine Learning TrackSDGs

Using supervised machine learning to link UN human rights recommendations with the 17 SDGs.